PET Scan Reliability: [18F]AlF-OC vs [68Ga]Ga-DOTA-SSA for NENs

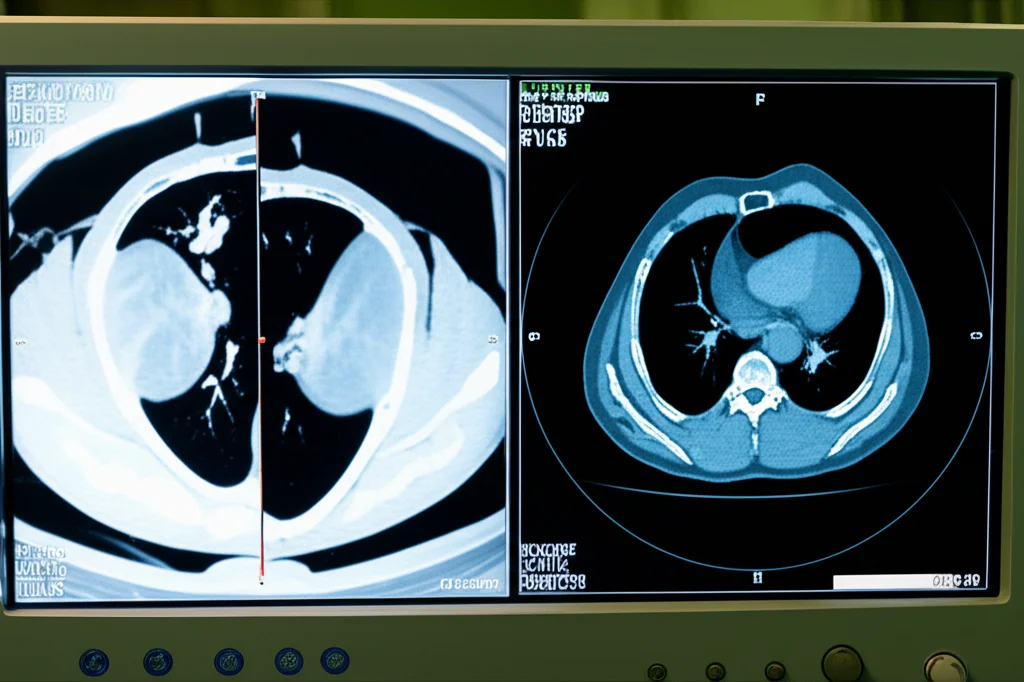

So, let’s talk about something pretty important in the world of medical imaging, specifically for folks dealing with neuroendocrine neoplasms, or NENs. If you’re not familiar, these are a group of tumors that often overexpress the somatostatin receptor (SSTR). That’s where PET/CT with radiolabeled somatostatin analogues (SSAs) comes in: it’s a cornerstone, a real big deal, in managing these patients.

For a while now, the go-to, the “gold standard” if you will, has been [68Ga]Ga-DOTA-SSA PET/CT. It’s been revolutionary, no doubt about it, helping doctors see and manage NENs like never before. But, like anything, it’s got its quirks: high costs, generators that aren’t always easy to come by, a short half-life (so only a few patients can be scanned per batch), and a relatively high positron energy, which means a longer positron range that can blur the spatial resolution a bit.

Enter fluorine-18-labeled tracers! These have been getting a lot of buzz lately, and for good reason. Fluorine-18 is produced in a cyclotron, which means higher production yields (way more patients per batch!), a longer, more practical half-life (about 110 minutes, versus about 68 minutes for gallium-68), and a shorter positron range. What does that mean in plain English? You can make a large batch in one place and ship it to centers that don’t have their own cyclotron or generator. Pretty neat, right?
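To put the half-life difference in numbers, here is a small decay calculation using the standard physical half-lives (about 67.7 minutes for gallium-68 and 109.8 minutes for fluorine-18). It is only a sketch: the delays are hypothetical, and real batch sizes also depend on synthesis time, yields and scanner throughput, which it ignores.

```python
import math

# Physical half-lives in minutes (standard nuclide data, rounded).
HALF_LIFE_MIN = {"Ga-68": 67.7, "F-18": 109.8}


def fraction_remaining(nuclide: str, minutes: float) -> float:
    """Fraction of the starting activity left after `minutes` of decay."""
    return math.exp(-math.log(2) * minutes / HALF_LIFE_MIN[nuclide])


# Hypothetical delays (transport, waiting list) between production and injection.
for delay in (60, 120, 240):
    ga = fraction_remaining("Ga-68", delay)
    f = fraction_remaining("F-18", delay)
    print(f"{delay:>3} min: Ga-68 {ga:.0%}  F-18 {f:.0%}")
```

After four hours, roughly a fifth of the fluorine-18 activity is still there, compared with under a tenth for gallium-68, which is why shipping fluorine-18 tracers to other centers is practical.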

One specific tracer, [18F]AlF-NOTA-octreotide (or [18F]AlF-OC for short), has been looking really promising. It’s got all those lovely fluorine-18 advantages and can be made using a standard, automated method. Early studies comparing it with the gallium-68 tracers showed similar safety and biodistribution (how the tracer spreads through the body).

Why Reader Agreement Matters

Now, here’s the thing. Having a great tracer is only one part of the puzzle. How reliably do different doctors, different readers, look at the same scans and come to the same conclusions? That’s interobserver agreement. And how consistent is a *single* doctor when reading the same scans again later? That’s intraobserver agreement. Both are super important for trusting a new tool in the clinic.

Previous work from our group (and others!) using data from a big multicenter trial (NCT04552847, if you’re into clinical trial IDs) already showed that [18F]AlF-OC was diagnostically superior, especially for liver metastases, and didn’t really change how most patients were managed compared to the gallium tracers. That’s a big step! But we wanted to really dive into how consistently readers interpret these scans.

The Study Setup

So, we took a look at the data from that same multicenter trial. We had 75 NEN patients who got *both* a [68Ga]Ga-DOTA-SSA scan (mostly DOTATATE, some DOTANOC) and an [18F]AlF-OC scan within about a month of each other. Five readers – a mix of experienced nuclear medicine physicians and one in training – independently reviewed the masked images. They looked for lesions, characterized them (was it benign? probably malignant? somewhere in between?), counted them, and scored how visible they were (conspicuity) using a 5-point scale. They also scored the overall image quality and something called the Krenning score, which helps decide if a patient might be a good candidate for therapy.

To figure out how much they agreed, we used a statistical measure called Gwet’s agreement coefficient. It copes well with missing ratings and, unlike Cohen’s or Fleiss’ kappa, it doesn’t drop sharply when nearly all ratings fall into one category (the so-called kappa paradox, which can happen when, say, almost every lesion is called malignant). It’s interpreted much like kappa: higher numbers mean better agreement, and values from 0.81 to 1.00 are considered “almost perfect.”
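For the curious, here is a minimal sketch of what Gwet’s coefficient computes: the unweighted, multi-rater AC1 for categorical ratings, following Gwet’s published formula. The study may have used a different variant (for example a weighted AC2 for the ordinal 5-point scale), so treat this as an illustration rather than the actual analysis code; the function name and toy data are our own.

```python
from collections import Counter
from typing import Hashable, Optional, Sequence


def gwet_ac1(
    ratings: Sequence[Sequence[Optional[Hashable]]],
    categories: Sequence[Hashable],
) -> float:
    """Unweighted Gwet's AC1 for any number of raters.

    ratings[i][j] is rater j's category for subject i, or None when that
    rater did not score the subject (missing data is allowed).
    """
    q = len(categories)
    if q < 2:
        raise ValueError("need at least two categories")
    pa_terms = []                          # per-subject observed agreement
    pi_sum = {k: 0.0 for k in categories}  # summed per-subject prevalences
    n_rated = 0                            # subjects with at least one rating
    for row in ratings:
        observed = [r for r in row if r is not None]
        if not observed:
            continue
        unknown = set(observed) - set(categories)
        if unknown:
            raise ValueError(f"unknown categories: {unknown}")
        counts = Counter(observed)
        r_i = len(observed)
        n_rated += 1
        for k in categories:
            pi_sum[k] += counts[k] / r_i
        # Pairwise agreement is only defined with two or more ratings.
        if r_i >= 2:
            pa_terms.append(
                sum(c * (c - 1) for c in counts.values()) / (r_i * (r_i - 1))
            )
    if not pa_terms:
        raise ValueError("no subject was rated by two or more raters")
    p_a = sum(pa_terms) / len(pa_terms)
    # Chance agreement from average category prevalences; it shrinks when
    # one category dominates, unlike kappa's chance term.
    p_e = sum(
        (pi_sum[k] / n_rated) * (1 - pi_sum[k] / n_rated) for k in categories
    ) / (q - 1)
    return (p_a - p_e) / (1 - p_e)


# Toy example: three lesions, three readers (invented data).
toy = [
    ["malignant", "malignant", "malignant"],
    ["benign", "benign", "benign"],
    ["malignant", "malignant", "benign"],
]
print(round(gwet_ac1(toy, ["benign", "malignant"]), 3))  # 0.561
```

Because the chance term uses p(1 − p) rather than p², a skewed mix of categories pushes chance agreement toward zero instead of toward one, which is why AC1 stays stable where kappa can collapse.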

For the intraobserver part, two of the readers (both less experienced, which is a limitation we’ll touch on) re-read a random subset of 20 patients’ scans after a month to see if they were consistent with themselves.
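Intraobserver agreement uses the same machinery: each reading session is simply treated as a separate “rater” scoring the same patients. Below is a minimal two-session version of the unweighted AC1; the function name and the toy ratings are hypothetical, and the exact coefficient variant used in the study isn’t specified in this summary.

```python
from typing import Hashable, Sequence


def intra_reader_ac1(
    first_read: Sequence[Hashable],
    second_read: Sequence[Hashable],
    categories: Sequence[Hashable],
) -> float:
    """Gwet's AC1 between two reading sessions of the same reader."""
    if len(first_read) != len(second_read) or not first_read:
        raise ValueError("both sessions must score the same, non-empty set of subjects")
    n = len(first_read)
    q = len(categories)
    # Observed agreement: share of subjects given the same category both times.
    p_a = sum(a == b for a, b in zip(first_read, second_read)) / n
    # Average prevalence of each category across the two sessions.
    pi = {
        k: (list(first_read).count(k) + list(second_read).count(k)) / (2 * n)
        for k in categories
    }
    p_e = sum(p * (1 - p) for p in pi.values()) / (q - 1)
    return (p_a - p_e) / (1 - p_e)


# Toy example: one reader, four lesions, read twice (invented data).
first = ["malignant", "malignant", "benign", "benign"]
second = ["malignant", "malignant", "benign", "malignant"]
print(round(intra_reader_ac1(first, second, ["benign", "malignant"]), 3))  # 0.529
```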

What We Found: Interobserver Agreement

Okay, drumroll please… the results were pretty awesome! When looking at lesion characterization (deciding if something was benign or malignant), the interobserver agreement was almost perfect for *both* tracers across all organs. We’re talking Gwet’s coefficients over 0.9 for both the detailed 5-point scale and a simpler benign vs. malignant grouping. This tells us that regardless of which tracer was used, different doctors looking at the scans largely agreed on what they were seeing.
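Why report both the full 5-point scale and a benign vs. malignant grouping? Near-misses on the detailed scale (say, “probably” versus “definitely” malignant) disappear once scores are collapsed into two groups. The sketch below shows that effect with plain percent agreement rather than Gwet’s coefficient, to keep it short; the cut-off (`malignant_from`) and the scores are hypothetical, since the study’s exact grouping rule isn’t given here.

```python
from typing import Sequence


def to_binary(score: int, malignant_from: int = 4) -> str:
    """Collapse a 1-5 lesion score into benign/malignant.

    malignant_from is a hypothetical cut-off: scores at or above it are
    counted as malignant.
    """
    if not 1 <= score <= 5:
        raise ValueError("score must be between 1 and 5")
    return "malignant" if score >= malignant_from else "benign"


def percent_agreement(a: Sequence, b: Sequence) -> float:
    """Share of lesions on which two readers gave the same label."""
    if len(a) != len(b) or not a:
        raise ValueError("readers must score the same, non-empty set of lesions")
    return sum(x == y for x, y in zip(a, b)) / len(a)


# Toy scores for the same five lesions from two readers (invented data).
reader_1 = [5, 4, 1, 5, 2]
reader_2 = [4, 5, 1, 5, 1]

print(percent_agreement(reader_1, reader_2))  # 5-point scale: 0.4
print(percent_agreement([to_binary(s) for s in reader_1],
                        [to_binary(s) for s in reader_2]))  # grouped: 1.0
```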

When it came to counting the number of malignant lesions, the agreement was still really good: substantial to almost perfect within individual organs. Across all organs combined, it was substantial for both tracers (around 0.73-0.74). We did notice slightly lower agreement for lymph nodes and peritoneal/omental lesions, which makes sense. Counting diffuse involvement can be tricky, and sometimes it’s hard to tell a lymph node from a peritoneal lesion without contrast-enhanced CT, which wasn’t used here.
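The verbal labels used throughout (“substantial”, “almost perfect”) appear to follow the widely used Landis and Koch benchmarks, originally proposed for kappa and commonly borrowed for Gwet’s coefficient. A tiny lookup makes the mapping explicit; the function name is our own.

```python
def landis_koch_label(coefficient: float) -> str:
    """Map an agreement coefficient to a Landis and Koch benchmark label.

    Bands: <0 poor, 0-0.20 slight, 0.21-0.40 fair, 0.41-0.60 moderate,
    0.61-0.80 substantial, 0.81-1.00 almost perfect. Values falling between
    two published bands (e.g. 0.805) go to the higher one here.
    """
    if coefficient > 1:
        raise ValueError("coefficient cannot exceed 1")
    if coefficient < 0:
        return "poor"
    bands = [
        (0.20, "slight"),
        (0.40, "fair"),
        (0.60, "moderate"),
        (0.80, "substantial"),
        (1.00, "almost perfect"),
    ]
    for upper, label in bands:
        if coefficient <= upper:
            return label
    raise AssertionError("unreachable")


# Values of the kind reported in this post.
for value in (0.73, 0.74, 0.79, 0.91):
    print(value, landis_koch_label(value))
```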

Interestingly, we didn’t find any big, clinically meaningful differences in agreement between the more experienced readers and the less experienced ones. That’s a great sign for both tracers!

Agreement on the Krenning score, which is super important for deciding on therapies, was also almost perfect for both tracers. Phew!

What We Found: Intraobserver Agreement

Consistency is key, right? So, how did the readers do when they looked at the same scans again later? Turns out, they were remarkably consistent! Intraobserver agreement for lesion characterization was very high (>0.91) for both readers and both tracers, and for lesion count it was also strong (>0.79). In other words, a reader who looks at the same scans again is very likely to reach the same conclusions.

Image Quality and Practical Perks

While the visibility of individual lesions (conspicuity) was pretty similar between the two tracers, there was a noticeable difference in overall image quality. [18F]AlF-OC scans got a significantly higher global image quality score (4.22 vs. 3.86). This could be partly due to the study protocols – we used a higher injected activity and longer scan times for the fluorine-18 tracer, leveraging its production advantages and longer half-life. It wasn’t about saying one tracer is *absolutely* better, but rather comparing how they perform using protocols optimized for their unique properties.

Speaking of those practical perks, the ability to produce [18F]AlF-OC in higher yields with a longer half-life means it’s easier to get this tracer to more places. That’s a big win for accessibility!

Putting It All Together: Clinical Impact

So, what does all this agreement talk boil down to for patients and doctors? Well, high interobserver agreement means you can trust the scan results regardless of which qualified doctor is reading them. This consistency is vital for adopting any new diagnostic tool. Our study, which is actually the largest of its kind looking at reader variability for SSTR PET and the first to compare two different tracers in the same NEN patients, shows that [18F]AlF-OC is just as reliable as the current gold standard, [68Ga]Ga-DOTA-SSA, when it comes to how consistently readers interpret the findings.

The excellent intraobserver agreement further reinforces that individual readers are consistent over time. Add in the slightly better global image quality and the practical advantages of fluorine-18 production and distribution, and you’ve got a really strong case for [18F]AlF-OC being a fantastic alternative for routine clinical use.

Acknowledging the Nuances

Of course, no study is perfect. Our intraobserver analysis only included two less-experienced readers, though their consistency suggests more experienced readers would be just as good, if not better. Also, we weren’t specifically powered to find tiny statistical differences in agreement *between* the tracers, but rather to show that [18F]AlF-OC is reliable. And as mentioned, the protocols for each tracer were optimized for their characteristics, so it’s a comparison of clinical *use* rather than a head-to-head comparison of the tracers under identical, potentially suboptimal, conditions for one or the other.

The Bottom Line

To wrap it up, what we found is that [18F]AlF-OC is right there with [68Ga]Ga-DOTA-SSAs in terms of how consistently different readers, and the same readers over time, interpret the scans for NEN patients. With its practical advantages and slightly better image quality, [18F]AlF-OC is definitely stepping up as a validated, reliable SSTR PET tracer that can be used alongside the current standard in everyday clinical practice. That’s great news for improving access to this important imaging technology!

Source: Springer
